The Science of Trinity

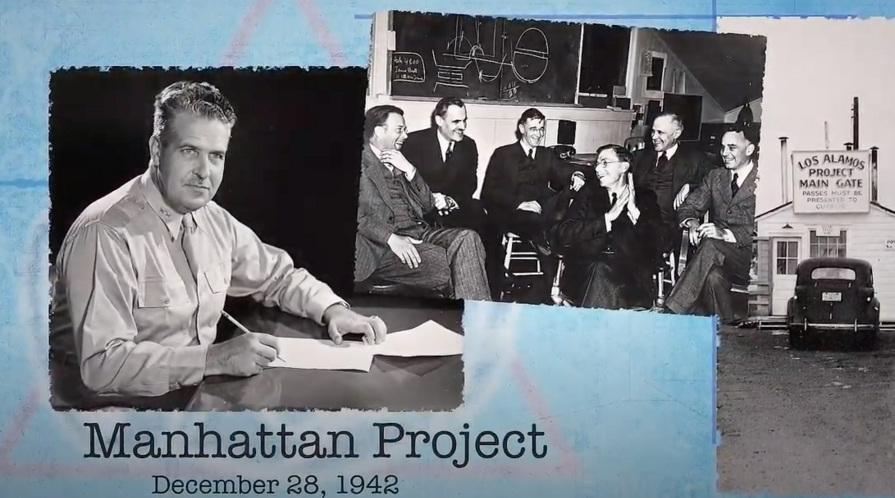

Seventy-five years ago, before 5:30 a.m. on July 16, 1945, Los Alamos scientists successfully conducted the world’s first nuclear weapons test. The test, which physicist J. Robert Oppenheimer named "Trinity" after a line from a poem by John Donne, altered the course of World War II, changed the way scientific discoveries are pursued, and cemented the relationship between science and national security.

Siegfried Hecker, a senior fellow at Stanford’s Center for International Security and Cooperation (CISAC), worked at Los Alamos National Laboratory for over three decades and served as its director for nearly 12 of those years. He joined CISAC in 2005 and served as the Center’s Science Co-director from 2007 to 2012.

In a video produced by Los Alamos to commemorate the historic events of 1945, Hecker reflects on the meaning of that moment. Here, Hecker answers questions that place those events in the context of today’s national security landscape and his current work.

As you say in the video, the Trinity project brought scientists from all over the world to Los Alamos and asked them to collaborate on the most sensitive project for the American government. At the time, that must have seemed radical, but did multidisciplinary, international collaboration become the norm?

It may seem odd, but it would be more difficult today than it was then. The U.S. was at war and concerned about Hitler’s Germany winning the race to the atomic bomb. It was actually the Brits that tried to convince President Roosevelt to mount a major effort to build the bomb. It was Europe that was the center of great physics at the time and it was Hitler who caused many of the best scientists to flee Europe and come to the United States – we welcomed them with open arms. We had the industrial capacity to mount such an enormous enterprise and did not have the enemy at our doorstep. But we needed their scientific skills and could not have developed the bomb in 27 months without them. Unfortunately, today we have retreated to more of a bunker mentality and are not as welcoming as we were then. For that matter, we’re not as welcoming as we were in 1956, when America allowed me to immigrate from Austria.

The scientists involved in this project had the agility to switch designs as they made new discoveries. Could you describe the type of talent and skills that allowed them to pursue new ideas so quickly?

The success of the Manhattan Project is typically viewed as the work of physicists. But it was really an incredible array of talent, spanning physics, chemistry, mathematics, computing, engineering, materials and others, that allowed it to deal with surprises like the gun-assembly not working with plutonium. That collaboration also allowed the team to redirect its energy when they found out that although plutonium may have been the physicist’s dream, it was an engineering nightmare. The metallurgists found a fix by adding a bit of gallium, as I explain in the video. Understanding why that’s so occupied a good part of my scientific life at Los Alamos.

How does your time at Los Alamos National Laboratory relate to the work you do now with students and pre- and post-doctoral fellows at CISAC?

Once the Soviet Union dissolved at the end of 1991, I turned much of my attention to working with the Russian nuclear establishment to mitigate the new nuclear dangers resulting from the political chaos. My Russian nuclear colleagues and I captured twenty-plus years of collaboration in our book Doomed to Cooperate. It turned out that CISAC became a great place for me to continue this work in 2005 and to expand it to the other nuclear countries around the world. Once at Stanford, I found that one of the most rewarding things I could do was to teach and work with students and post-docs. That’s what I continue to do today in what we call Young Professionals Nuclear Forums. We bring together around a dozen young Americans to work with their counterparts in Russia on nuclear challenges. We do the same with Chinese and American young professionals.

Since its founding, CISAC has always had two directors: one with a science background and the other from the social sciences. As a former director of both CISAC and Los Alamos, can you explain how an academic center like CISAC, with that kind of combined leadership, can help to prepare the next generation of thinkers in international security?

That’s one of the things that attracted me to CISAC. From CISAC’s founding days of John Lewis (political science) and Sid Drell (physics), the Center has tackled problems at the intersection of the natural and social sciences. And that’s where the hard problems lie. By focusing on the challenges that arise at this intersection, CISAC can help to educate the next generation of national security specialists to tackle the world’s difficult problems. It’s a great place to be if you are interested in international security.

Watch The Science of Trinity

Siegfried Hecker, former director of Los Alamos National Laboratory and former co-director of the Center for International Security and Cooperation, reflects on the meaning of the Trinity nuclear weapons test and its implications for national security today.

Dr. Roland Hsu
